What you will learn from this course

- Use logistic regression, naïve Bayes, and word vectors to implement sentiment analysis, complete analogies, and translate words, and use locality-sensitive hashing for approximate nearest neighbors.

- Use dynamic programming, hidden Markov models, and word embeddings to autocorrect misspelled words, autocomplete partial sentences, and identify part-of-speech tags for words.

- Use dense and recurrent neural networks, LSTMs, GRUs, and Siamese networks in TensorFlow and Trax to perform advanced sentiment analysis, text generation, and named entity recognition, and to identify duplicate questions.

- Use encoder-decoder, causal, and self-attention to perform advanced machine translation of complete sentences, text summarization, and question answering, and to build chatbots. Models covered include T5, BERT, Transformer, Reformer, and more!

- Learn to extract features from text into numerical vectors

- Learn the theory behind Bayes’ rule for conditional probabilities

- Learn how to create word vectors that capture dependencies between words

- Learn to transform word vectors and assign them to subsets using locality-sensitive hashing

- Learn about autocorrect, minimum edit distance, and dynamic programming

- Learn about Markov chains and hidden Markov models

- Learn how N-gram language models work by calculating sequence probabilities

- Learn how word embeddings carry the semantic meaning of words

- Learn about the limitations of traditional language models

- Learn how long short-term memory units (LSTMs) mitigate the vanishing gradient problem

- Learn about Siamese networks
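
To give a feel for the autocorrect topic in the list above, here is a minimal sketch of minimum edit distance computed with dynamic programming. The function name `min_edit_distance` and its cost parameters are illustrative assumptions and are not taken from the course materials; the defaults use a cost of 1 for every operation, and a course may weight substitutions differently.

```python
def min_edit_distance(source, target, ins_cost=1, del_cost=1, sub_cost=1):
    """Return the minimum cost of editing `source` into `target`.

    Hypothetical helper for illustration; the costs are assumptions.
    """
    m, n = len(source), len(target)
    # dist[i][j] holds the cost of turning source[:i] into target[:j].
    dist = [[0] * (n + 1) for _ in range(m + 1)]

    # First column: delete every character of the source prefix.
    for i in range(1, m + 1):
        dist[i][0] = dist[i - 1][0] + del_cost
    # First row: insert every character of the target prefix.
    for j in range(1, n + 1):
        dist[0][j] = dist[0][j - 1] + ins_cost

    # Fill the table from smaller prefixes to larger ones.
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            same = source[i - 1] == target[j - 1]
            dist[i][j] = min(
                dist[i - 1][j] + del_cost,                       # delete
                dist[i][j - 1] + ins_cost,                       # insert
                dist[i - 1][j - 1] + (0 if same else sub_cost),  # keep or substitute
            )
    return dist[m][n]


print(min_edit_distance("kitten", "sitting"))  # prints 3
```

Passing `sub_cost=2` makes a substitution cost as much as a delete followed by an insert, which is one common alternative weighting.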

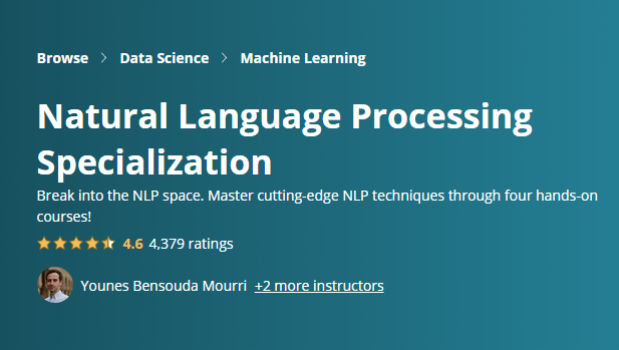

This Specialization is designed and taught by two experts in NLP, machine learning, and deep learning. Younes Bensouda Mourri is an Instructor of AI at Stanford University who also helped build the Deep Learning Specialization. Łukasz Kaiser is a Staff Research Scientist at Google Brain and the co-author of TensorFlow, the Tensor2Tensor and Trax libraries, and the Transformer paper.

Can I download the Natural Language Processing Specialization course?

You can download videos for offline viewing in the Android/iOS app. If the course instructors enable downloading for the lectures, you can also download them for offline viewing on a desktop.

Can I get a certificate after completing the course?

Yes. Upon successful completion of the course, learners receive an e-certificate from the course provider. The Natural Language Processing Specialization certificate is proof that you completed and passed the course. You can download it, attach it to your resume, and share it on social media.

Are there any other coupons available for this course?

You can check for more Udemy coupons at www.coursecouponclub.com

Note: 100% OFF Udemy coupon codes are valid for a maximum of 3 days. Look for the "ENROLL NOW" button at the end of the post.

Disclosure: This post may contain affiliate links, and we may earn a small commission if you make a purchase. Read more about Affiliate disclosure here.